We build interpretable AI to replace the black box.

Our models bring transparency to situations where the reasoning behind a decision matters as much as the decision itself. We work in close collaboration with teams in banking, financial services, and insurance.

Models don't explain their reasoning. They generate plausible accounts of it. Existing explanation tools were built for an older generation of architectures and don't extend faithfully to the models being deployed today. As AI moves from pilot to production, that gap becomes the difference between a model that ships and one that stalls.

For organizations in banking, financial services, and insurance, the stakes are higher still. Regulators, auditors, and customers expect answers. These teams know that a decision without a reason is not a decision they can stand behind.

We work with your team to understand your current systems and build a model that targets a critical decision point in an agentic workflow. When the agent needs to make an explainable decision based on unstructured input, it relies on our decision model.

Every decision comes with an audit trace that reveals the interpretable features behind it. Stakeholders can inspect these traces manually or through a conversational interface that lets them ask why the model made each choice.

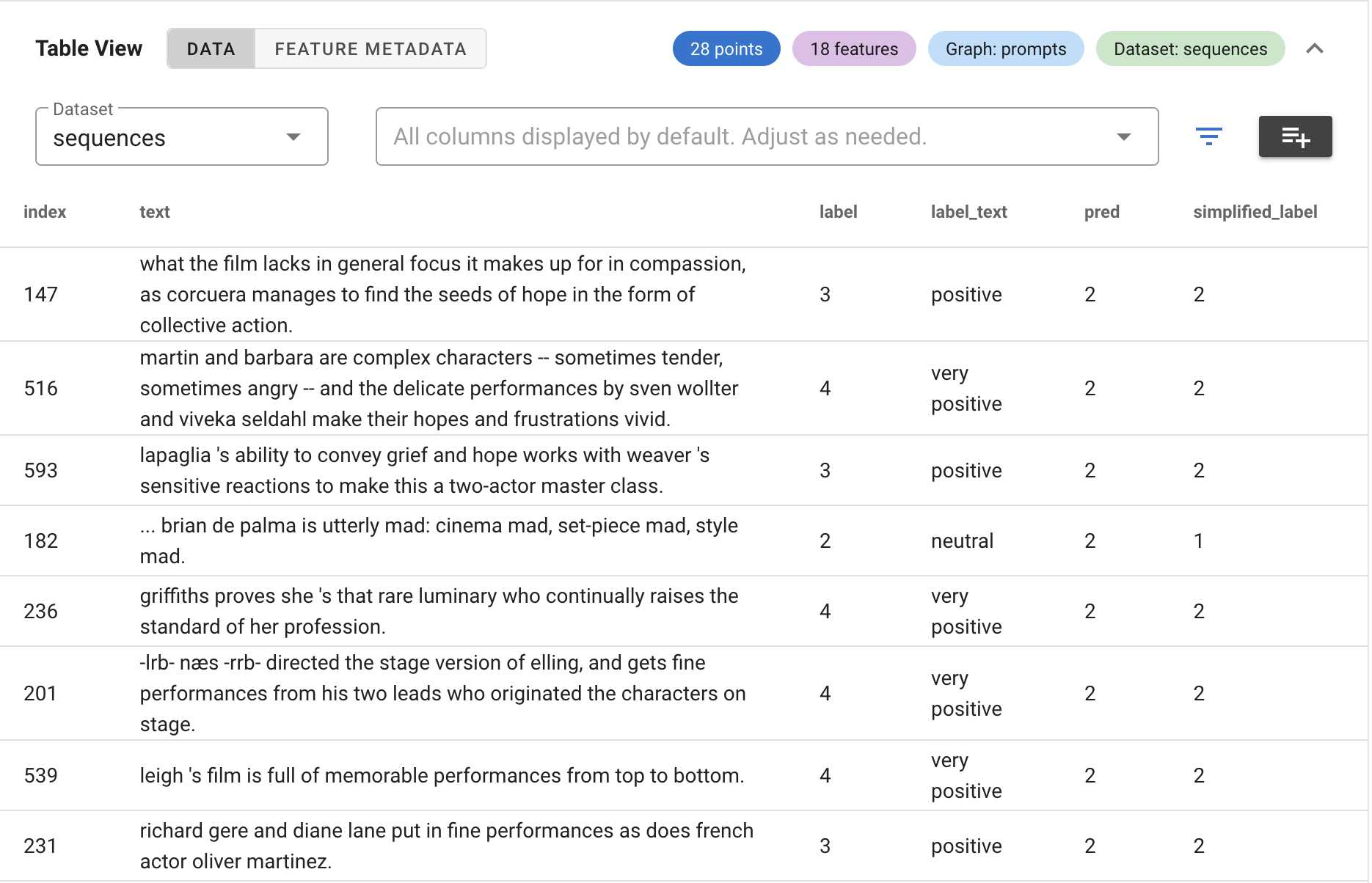

Cobalt is our interpretability platform that allows data teams to dig deeper into model internals and behavior.

We partner with teams in banking, financial services, and insurance to bring interpretability to the AI workflows where it matters most.

Operational savings from applying AI to fraud triage depend on scaling auto-close decisions. That requires a per-case attribution record at the point of disposition. We build the model that produces it.

When human agents follow the AI recommendation most of the time, the model's reasoning needs to be documented. We provide the per-decision attribution record that risk and compliance teams need to see.

Automated lending decisions need transparent reasoning, particularly when built on non-traditional data. We surface patterns in model behavior that post-hoc tools miss.

When a model draws a conclusion from months of transaction history, you need confidence that the conclusion was actually driven by the data. We make that reasoning visible and auditable.

Our approach combines two of the most rigorous methods for understanding AI systems: Topological Data Analysis (TDA) and mechanistic interpretability.

TDA reveals the shape of high-dimensional model behavior without assuming in advance what that shape should be. It surfaces clusters, transitions, and failure modes that standard evaluation misses.
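One widely used TDA construction is the Mapper algorithm: project the data through a lens function, cover the lens range with overlapping intervals, cluster the points that fall in each interval, and connect clusters that share points. The sketch below is a minimal pure-Python illustration of that general idea, not Cobalt's implementation. The function names, the single-linkage clustering step, and the parameters (`n_intervals`, `overlap`, `eps`) are illustrative assumptions. On points sampled from a circle with height as the lens, the resulting graph preserves the loop, the kind of shape a single summary metric would hide.

```python
import math
from itertools import combinations


def single_linkage_clusters(points, idxs, eps):
    """Split the given point indices into connected components of the
    graph that links any two points closer than eps (single linkage)."""
    unvisited = set(idxs)
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        component, frontier = {seed}, [seed]
        while frontier:
            i = frontier.pop()
            near = {j for j in unvisited if math.dist(points[i], points[j]) < eps}
            unvisited -= near
            component |= near
            frontier.extend(near)
        clusters.append(frozenset(component))
    return clusters


def mapper_graph(points, lens, n_intervals=4, overlap=0.25, eps=1.0):
    """Return (nodes, edges): nodes are clusters of point indices, and an
    edge joins two clusters that share at least one point."""
    values = [lens(p) for p in points]
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_intervals
    pad = overlap * width  # widen each interval so neighboring intervals overlap
    nodes = []
    for k in range(n_intervals):
        start, end = lo + k * width - pad, lo + (k + 1) * width + pad
        members = [i for i, v in enumerate(values) if start <= v <= end]
        nodes.extend(single_linkage_clusters(points, members, eps))
    edges = {(a, b) for a, b in combinations(range(len(nodes)), 2)
             if nodes[a] & nodes[b]}
    return nodes, edges


# Usage: 12 points on the unit circle, with height (y) as the lens.
circle = [(math.cos(2 * math.pi * k / 12), math.sin(2 * math.pi * k / 12))
          for k in range(12)]
nodes, edges = mapper_graph(circle, lens=lambda p: p[1],
                            n_intervals=3, overlap=0.3, eps=0.6)
print(len(nodes), "nodes,", len(edges), "edges")  # 4 nodes, 4 edges: a loop
```

The overlap is what makes the graph informative: clusters from adjacent intervals share points, so connectivity across the lens range, and loops like this one, are carried into the graph.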

We decompose model activations into interpretable features using sparse autoencoders and cross-layer transcoders. We map the circuits and concepts inside your model: not just what it predicts, but why, at the level of internal representations.
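A sparse autoencoder (SAE) learns an overcomplete dictionary of feature directions and encodes each activation vector as a mostly-zero combination of them. The sketch below shows the forward pass and training objective in one common formulation: `f = ReLU(W_enc (x - b_dec) + b_enc)`, `x_hat = W_dec f + b_dec`, and `loss = ||x - x_hat||^2 + l1_coeff * ||f||_1`. It is an illustrative sketch, not our implementation. Training is omitted, the weights are hand-picked for a two-dimensional toy example, and the function names are hypothetical. Cross-layer transcoders, whose features read from one layer and help reconstruct MLP outputs at that layer and later ones, are not shown.

```python
import math


def relu(v):
    return max(0.0, v)


def encode(x, W_enc, b_enc, b_dec):
    """Feature activations f = ReLU(W_enc (x - b_dec) + b_enc)."""
    centered = [xi - bi for xi, bi in zip(x, b_dec)]
    return [relu(sum(w * c for w, c in zip(row, centered)) + b)
            for row, b in zip(W_enc, b_enc)]


def decode(f, W_dec, b_dec):
    """Reconstruction x_hat = b_dec + sum_k f[k] * W_dec[k]. W_dec is stored
    one row per feature (the direction that feature writes into activation
    space), i.e. the transpose of W_dec in x_hat = W_dec f + b_dec."""
    x_hat = list(b_dec)
    for fk, direction in zip(f, W_dec):
        for j, dj in enumerate(direction):
            x_hat[j] += fk * dj
    return x_hat


def sae_loss(x, x_hat, f, l1_coeff):
    """Squared reconstruction error plus an L1 penalty that pushes most
    feature activations toward zero."""
    recon = math.fsum((a - b) ** 2 for a, b in zip(x, x_hat))
    return recon + l1_coeff * math.fsum(abs(v) for v in f)


# Toy example: 3 features over a 2-d activation space (hand-picked, not trained).
W_enc = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
b_enc = [-0.1, -0.1, -0.1]   # negative bias acts as an activation threshold
W_dec = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]  # tied to W_enc for simplicity
b_dec = [0.0, 0.0]

x = [2.0, 0.0]
f = encode(x, W_enc, b_enc, b_dec)   # only the first feature fires
x_hat = decode(f, W_dec, b_dec)
print(f, x_hat, sae_loss(x, x_hat, f, l1_coeff=0.1))
```

Only one of the three features is active for this input, which is the property that makes SAE features readable: each activation is explained by a small number of named directions.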

Together, these methods produce models whose reasoning is structurally transparent. The result is interpretability that compliance and risk teams can verify, not just trust.

Our cross-layer transcoders for Qwen3 models reveal interpretable concepts inside an LLM. These concepts can be connected to trace computational circuits, revealing how the model produces its output for a given prompt.
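Once features are extracted, a circuit can be approximated as a weighted graph between them. The sketch below uses a deliberately simplified, purely linear attribution rule, assumed here for illustration rather than taken from the full tracing procedure. The edge from an active source feature `s` to a downstream feature `t` is the source activation times the dot product of `s`'s decoder direction with `t`'s encoder direction, i.e. `s`'s direct contribution to `t`'s pre-activation. Real circuit tracing must also account for attention, nonlinearities, and reconstruction error. The function name, threshold, and toy vectors are hypothetical.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def attribution_edges(src_acts, src_dec, tgt_enc, threshold):
    """Map (source, target) feature pairs to the source's direct linear
    contribution a_s * <dec_s, enc_t> to the target's pre-activation,
    keeping only edges whose magnitude reaches the threshold."""
    edges = {}
    for s, (a_s, d_s) in enumerate(zip(src_acts, src_dec)):
        if a_s == 0:
            continue  # inactive features contribute nothing
        for t, e_t in enumerate(tgt_enc):
            w = a_s * dot(d_s, e_t)
            if abs(w) >= threshold:
                edges[(s, t)] = w
    return edges


# Toy example: 3 upstream features feeding 2 downstream features.
src_acts = [2.0, 0.0, 0.5]
src_dec = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
tgt_enc = [[1.0, 0.0], [0.0, 1.0]]
print(attribution_edges(src_acts, src_dec, tgt_enc, threshold=0.1))
# {(0, 0): 2.0, (2, 0): 0.25, (2, 1): 0.25}
```

Raising the threshold prunes weak edges; at `threshold=1.0` only the edge `(0, 0)` survives, leaving the dominant pathway as the candidate circuit.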

With TDA, we can map relationships between features and concepts to show how they combine hierarchically.

Cobalt is our TDA-powered engine for AI interpretability. It helps data teams discover, inspect, and verify AI behavior.

With Cobalt, teams can uncover connections between model inputs, interpretable features, and model decisions, supporting a holistic approach to model understanding. By helping teams understand the model's internal representations and how they relate to its behavior, Cobalt gives compliance and technical stakeholders the transparency they need to deploy trustworthy AI.

Install: `pip install cobalt-ai`

Sachin Khanna, CEO

Gunnar Carlsson, Founder

Jakob Hansen, Head of Data Science

John Carlsson, Principal Scientist

David Fooshee, Principal Scientist

We were founded by Dr. Gunnar Carlsson, one of the inventors of Topological Data Analysis at Stanford. The founding team combines pioneering research in TDA and mechanistic interpretability with decades of enterprise software execution across global organizations.

Our advisory board brings deep credibility in banking, financial services, and insurance (BFSI), with members spanning tier-1 banking CTOs, AI governance leaders at global financial institutions, and PhD-level experts in explanation-based AI. Together, we navigate both the scientific complexity of interpretability and the operational reality of deploying AI in high-stakes environments.